In a significant advance for neurophotonics and biomedical imaging, researchers from South Korea and the United States have unveiled an event-based optical imaging framework that captures in vivo neuronal and vascular dynamics at kilohertz temporal resolution with markedly improved data efficiency. The work repurposes event cameras, which are traditionally used for high-speed motion detection in robotics and autonomous vehicles, as a tool for detecting the subtle fluorescence changes tied to blood flow and neuronal activity in the brain. The findings, recently published in PhotoniX, show that these neuromorphic sensors, once carefully characterized and paired with computational reconstruction, can reveal intricate biological processes at kilohertz speed across large fields of view.

Event cameras differ fundamentally from conventional frame-based imaging systems: rather than capturing entire scenes at fixed frame intervals, each pixel independently and asynchronously reports changes in brightness with microsecond precision. This capability has made them invaluable for capturing fast-moving objects and complex edge dynamics, but whether they could be applied to biological systems, where intensity fluctuations are subtle, remained an open and challenging question. A team from Seoul National University’s Neurophotonics Lab and the NICA Lab at KAIST, together with collaborators at GIST, developed a comprehensive methodology for extracting the low-amplitude signals of cortical vasculature and neuronal calcium activity by combining the distinctive properties of event-based sensing with advanced computational reconstruction.
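The sensing principle can be illustrated with the idealized model commonly used to describe event cameras: a pixel emits an event whenever its log intensity has changed by more than a fixed contrast threshold since its last event. The sketch below simulates a single pixel under that assumption. The names `Event` and `generate_events` and the threshold value are hypothetical, and real sensors add noise, refractory periods, and pixel-to-pixel threshold variation that this model ignores.

```python
import math
from dataclasses import dataclass


@dataclass
class Event:
    t: float       # timestamp (same units as the input time axis)
    polarity: int  # +1 = brightness increase, -1 = brightness decrease


def generate_events(times, intensities, threshold=0.2):
    """Idealized single-pixel event generation (hypothetical helper).

    Assumes the simple contrast model: an event fires each time the log
    intensity moves by at least `threshold` from its value at the last event.
    Noise, refractory periods and threshold mismatch are ignored.
    """
    events = []
    ref = math.log(intensities[0])  # log intensity at the last event
    for t, value in zip(times[1:], intensities[1:]):
        delta = math.log(value) - ref
        # A large change can trigger several events at the same timestamp.
        while abs(delta) >= threshold:
            polarity = 1 if delta > 0 else -1
            events.append(Event(t, polarity))
            ref += polarity * threshold  # reference steps by one threshold
            delta = math.log(value) - ref
    return events
```

Under this model, a pixel whose fluorescence doubles produces a short burst of positive events, while a fluctuation smaller than the threshold produces none, which is why characterizing the sensor's response to low-amplitude signals matters.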

Central to this research was a rigorous sensor characterization performed under conditions that emulate biological functional signals rather than the motion-induced brightness changes event cameras typically encounter. The researchers quantified how the event camera responds to small, slowly varying fluorescence changes, mapping the sensor’s temporal precision and noise profile under these imaging conditions. This calibration was critical for translating the binary “events,” which indicate only that a pixel’s brightness increased or decreased, into scientifically meaningful measures of physiological dynamics such as blood flow velocity and neuronal calcium transients.
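To see why calibration matters, consider the simplest way to turn events back into a signal: add up the event polarities and scale by the contrast threshold. The sketch below does exactly that. The function name `integrate_events`, the `(timestamp, polarity)` tuple format, and the assumption that the threshold is known exactly are illustrative; this is not the authors’ reconstruction method.

```python
import math


def integrate_events(events, threshold, t_grid):
    """Naive event integration (hypothetical helper).

    Estimates relative intensity I(t) / I(0) at each time in t_grid.
    events: (timestamp, polarity) tuples sorted by time, polarity in {+1, -1}.
    threshold: contrast threshold in log units, assumed known from calibration.
    """
    trace = []
    log_level = 0.0  # accumulated log-intensity change since t = 0
    k = 0
    for t in t_grid:
        # Fold in every event that occurred up to and including time t.
        while k < len(events) and events[k][0] <= t:
            log_level += events[k][1] * threshold
            k += 1
        trace.append(math.exp(log_level))
    return trace
```

Any error in the assumed threshold, or any missed or spurious event, accumulates in the running sum and causes the estimate to drift, which is one reason a direct integration like this is fragile for subtle biological signals.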

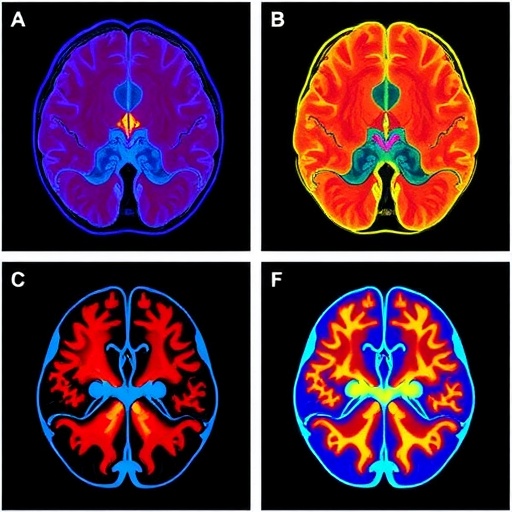

The practical utility of this approach was validated through in vivo experiments in anesthetized mice fitted with cranial windows that exposed the cortical vasculature. The team showed that event cameras can capture vascular dynamics at an effective acquisition rate of 1000 Hz, a speed that previously required either overwhelming data volumes or a reduced field of view. Widefield fluorescence images acquired with a conventional sCMOS camera served as a benchmark, while the event streams revealed rich temporal information encoded in the positive and negative brightness fluctuations produced as red blood cells pass through microvessels. This capability lets researchers track rapid hemodynamic changes at millisecond resolution, opening new avenues for studying neurovascular coupling and cerebral blood flow regulation.
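An effective 1000 Hz rate can be obtained from an asynchronous event stream by grouping events into 1 ms time bins. The sketch below shows one simple way to do this, not necessarily the procedure used in the study. It assumes events arrive as `(t_us, x, y, polarity)` tuples with integer microsecond timestamps, and the function name `bin_events` is hypothetical.

```python
def bin_events(events, width, height, bin_us=1000):
    """Accumulate signed event counts into frames (hypothetical helper).

    events: iterable of (t_us, x, y, polarity), polarity in {+1, -1},
            with integer timestamps in microseconds.
    bin_us: bin width; 1000 us per bin corresponds to 1000 frames per second.
    Returns a list of frames where frames[k][y][x] is the net polarity in bin k.
    """
    events = list(events)
    if not events:
        return []
    t0 = min(e[0] for e in events)
    n_bins = (max(e[0] for e in events) - t0) // bin_us + 1
    # One height x width grid of zeros per time bin.
    frames = [[[0] * width for _ in range(height)] for _ in range(n_bins)]
    for t, x, y, p in events:
        frames[(t - t0) // bin_us][y][x] += p
    return frames
```

Keeping positive and negative polarities as a signed sum preserves the direction of each brightness change, such as the rise and fall as a red blood cell passes; keeping separate ON and OFF counts would be an equally reasonable choice.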

Beyond vascular imaging, the investigators extended their framework to monitoring neuronal calcium activity, both in cultured neurons and in the living mouse cortex. Here the asynchronous, binary nature of event data posed an analytical challenge, because neuroscientific analyses typically rely on continuous fluorescence intensity traces expressed as ΔF/F₀. To overcome this obstacle, the team developed a self-supervised machine learning algorithm called Implicit Neural Factorization (INF). INF uses the precise timing of events to infer continuous functional images from sparse event streams, without requiring paired “ground truth” frames. It reconstructs smooth, temporally resolved ΔF/F₀ images, enabling direct comparison with conventional imaging while retaining the data and speed advantages of event-based recording.
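For reference, ΔF/F₀ is the fractional change in fluorescence relative to a baseline F₀, that is, (F − F₀)/F₀. The minimal sketch below computes it for a single frame-based trace, assuming F₀ is the mean of an initial baseline window. That baseline convention and the function name are assumptions, and the code shows only the target quantity, not how INF reconstructs it from events.

```python
def delta_f_over_f0(trace, baseline_frames):
    """Compute dF/F0 for one fluorescence trace (hypothetical helper).

    Assumes F0 is the mean of the first `baseline_frames` samples; other common
    conventions use a rolling low percentile of the trace instead.
    """
    if not 1 <= baseline_frames <= len(trace):
        raise ValueError("baseline_frames must be between 1 and len(trace)")
    f0 = sum(trace[:baseline_frames]) / baseline_frames
    if f0 == 0:
        raise ValueError("baseline fluorescence F0 must be nonzero")
    # Fractional change relative to baseline: (F - F0) / F0.
    return [(f - f0) / f0 for f in trace]
```

A calcium transient that raises fluorescence from a baseline of 100 to 150 (arbitrary units) corresponds to a ΔF/F₀ of 0.5.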

This combination of sensor calibration, in vivo biological validation, and self-supervised computational reconstruction constitutes a pivotal advance in functional optical imaging. Frame-based cameras read out every pixel in every frame whether or not it has changed; by avoiding this redundancy, event cameras coupled with INF hold great promise for scaling high-speed functional imaging to larger fields of view and finer temporal resolutions than traditional cameras can practically reach. This is especially relevant for genetically encoded voltage indicators and voltage-sensitive dyes, whose millisecond-scale dynamics place stringent demands on acquisition speed and data throughput.
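The data-redundancy argument comes down to simple arithmetic: a frame camera's output scales with pixel count times frame rate, while an event camera's output scales with how many brightness changes actually occur. The sketch below expresses both. The function names, and every number used with them, are hypothetical illustrations rather than figures from the study.

```python
def frame_camera_rate(width, height, bits_per_pixel, fps):
    """Bytes per second for a frame camera (hypothetical helper).

    Every pixel is read out in every frame, regardless of scene activity.
    """
    return width * height * bits_per_pixel / 8 * fps


def event_camera_rate(events_per_second, bytes_per_event):
    """Bytes per second for an event camera (hypothetical helper).

    Output scales with the number of brightness changes, not pixels x fps.
    """
    return events_per_second * bytes_per_event
```

With purely hypothetical inputs, a 1024 × 1024 sensor at 16 bits and 1000 frames per second gives about 2.1 GB/s, whereas 10 million events per second at 8 bytes each gives 80 MB/s. The event figure rises with denser activity or sensor noise, so the actual savings depend on the sample and the sensor's encoding.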

The implications of this work extend across neuroscience and biomedical optics: neuromorphic event sensors can be repurposed from their conventional roles in robotics into versatile instruments for probing neural activity and vascular physiology with high sensitivity and temporal acuity. The study not only establishes the fundamental feasibility of event-based functional imaging but also provides a practical blueprint, comprising sensor calibration protocols and a neural reconstruction approach, for realizing that potential in vivo.

As the field advances, the integration of event cameras with multimodal imaging and sophisticated data-driven algorithms will likely foster breakthroughs in understanding neurovascular interactions, neuronal circuit dynamics, and the fast signaling mechanisms underpinning brain function. This approach offers a pathway to overcome historical limitations in data rates and file sizes that have constrained large-scale, real-time optical neuroimaging, paving the way for next-generation neurotechnology platforms.

Ultimately, this research epitomizes how the convergence of neuromorphic hardware and cutting-edge computational techniques can catalyze innovation at the intersection of optics, neuroscience, and biomedical engineering. By revealing the rich spatiotemporal structure of biological activity encoded as fleeting brightness changes, event cameras and INF reconstruction provide a fresh lens on the brain’s dynamic landscape, holding promise for both fundamental science and clinical applications where speed and sensitivity are paramount.

The collaborative effort between Seoul National University, KAIST, and GIST, showcased in PhotoniX, signals an exciting new chapter for functional neuroimaging. It underscores the transformative potential of reimagining existing sensor technologies through a biological lens and harnessing the power of machine learning to decode complex physiological phenomena from minimalistic signals. This breakthrough invites a reevaluation of imaging paradigms and inspires future explorations into real-time, high-resolution visualization of brain function across scales and modalities.

Subject of Research: Cells

Article Title: Event-based optical imaging and reconstruction of in vivo neuronal and vascular dynamics

News Publication Date: 25-Mar-2026

Web References: http://dx.doi.org/10.1186/s43074-026-00240-8

Image Credits: Prof. Myunghwan Choi and Prof. Kunyoo Shin at Seoul National University, Prof. Young-gyu Yoon at KAIST, Prof. Euiheon Chung at GIST, PhotoniX

Keywords: Event cameras, neurophotonics, functional brain imaging, vascular dynamics, neuronal calcium imaging, neuromorphic sensors, Implicit Neural Factorization, single-photon imaging, high-speed imaging, data-efficient acquisition, neurovascular coupling, computational reconstruction

Tags: asynchronous pixel brightness detection, brain activity imaging, computational neuroimaging reconstruction, event-based optical imaging, fluorescence blood flow detection, functional brain reconstruction, high temporal resolution imaging, in vivo neuronal dynamics, kilohertz vascular imaging, large field of view brain imaging, neuromorphic event-based camera, neurophotonics advancements