In the fast-evolving landscape of automation, enabling robots to autonomously handle objects with diverse optical properties has long remained a formidable challenge. Robots rely heavily on accurate three-dimensional perception to identify, grasp, and manipulate objects effectively. For transparent or highly reflective items, such as glassware or polished metals, conventional depth sensors falter, leaving many robotic systems ineffective and requiring human intervention. This bottleneck undermines the promise of seamless automation in sectors ranging from manufacturing to restaurants, where handling a variety of materials is routine.

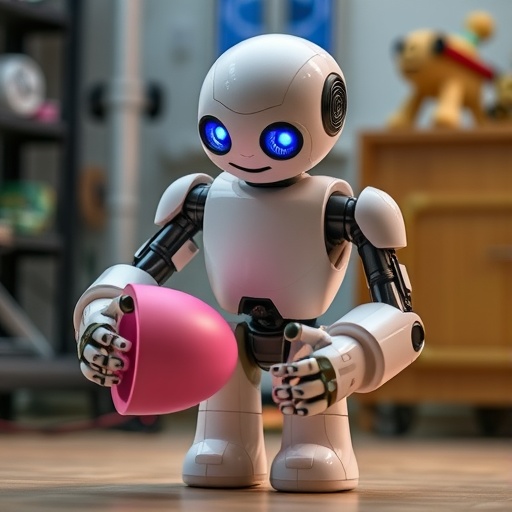

Enter HEAPGrasp, a new approach devised by researchers from Tokyo University of Science in Japan. It changes how robots perceive challenging objects by relying only on their silhouettes (the contours visible in RGB images), so that perception no longer depends on optical properties such as transparency or gloss. By sidestepping the pitfalls of traditional 3D sensing modalities, HEAPGrasp allows robots to measure and grasp items reliably, even in highly reflective or transparent scenarios where depth-based methods fail.

The core concept underpinning HEAPGrasp is the Shape from Silhouette (SfS) technique, a geometric reconstruction method that estimates an object’s three-dimensional form by intersecting volumes derived from multiple two-dimensional silhouettes captured from different viewpoints. Typically, obtaining these multiple perspectives involves rotating robotic cameras around the scene, which increases computational load and operational time. To optimize this process, the research team integrated a deep learning-driven next pose planner that intelligently determines the most informative viewpoints to reduce redundancy and accelerate the perception pipeline.

The entire system functions with a single hand-eye RGB camera, enhancing its practicality and adaptability. First, the system uses a semantic segmentation network, DeepLabv3+ with a ResNet-50 backbone, to separate object silhouettes from complex backgrounds. This network classifies each pixel of the image into categories such as “object” or “background,” extracting the contours needed for the silhouette-based 3D reconstruction. This segmentation step is crucial, as it directly influences the fidelity of the subsequent spatial model.
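As a rough illustration of the step from per-pixel classification to silhouette, the sketch below takes hypothetical two-class scores (background, object) for each pixel, keeps the higher-scoring class, and then lists the object pixels that border the background. It does not run DeepLabv3+ itself; the function names `scores_to_mask` and `contour_pixels` and the score layout are assumptions made for this example.

```python
from typing import List, Tuple

Mask = List[List[int]]


def scores_to_mask(scores: List[List[Tuple[float, float]]]) -> Mask:
    """Turn per-pixel (background_score, object_score) pairs into a 0/1 mask.

    A pixel is labeled 1 ("object") when its object score is the larger one.
    The score layout is an assumption for this sketch.
    """
    return [[1 if obj > bg else 0 for (bg, obj) in row] for row in scores]


def contour_pixels(mask: Mask) -> List[Tuple[int, int]]:
    """Return object pixels that touch background or the image border.

    Uses the 4-neighborhood (up, down, left, right).
    """
    height, width = len(mask), len(mask[0])
    contour = []
    for r in range(height):
        for c in range(width):
            if mask[r][c] != 1:
                continue
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                rr, cc = r + dr, c + dc
                outside = not (0 <= rr < height and 0 <= cc < width)
                if outside or mask[rr][cc] == 0:
                    contour.append((r, c))
                    break
    return contour
```

In a real pipeline the scores would come from the segmentation network's output, and the resulting mask, rather than a depth map, is what feeds the 3D reconstruction.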

Once silhouette data is extracted, the Shape from Silhouette calculation combines these perspectives into a volumetric approximation of the object’s shape and spatial position. Unlike traditional depth-based methods, this approach is unaffected by transparency and reflectivity, because the system requires only contour information. This makes HEAPGrasp particularly advantageous for materials that traditionally confound robotic vision systems, considerably expanding the range of objects a robot can handle.
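A minimal sketch of silhouette-based carving follows, under strong simplifying assumptions: a small voxel grid, orthographic views along the coordinate axes instead of a calibrated hand-eye camera, and hypothetical helper names `project` and `carve`. A voxel survives only if it projects inside every silhouette it is checked against.

```python
from typing import List, Tuple

Mask = List[List[int]]
Voxel = Tuple[int, int, int]


def project(voxel: Voxel, axis: str) -> Tuple[int, int]:
    """Orthographic projection: drop the coordinate along the viewing axis."""
    x, y, z = voxel
    if axis == "x":
        return (y, z)
    if axis == "y":
        return (x, z)
    if axis == "z":
        return (x, y)
    raise ValueError(f"unknown axis: {axis}")


def carve(n: int, views: List[Tuple[str, Mask]]) -> List[Voxel]:
    """Keep the voxels of an n*n*n grid that fall inside all silhouettes.

    Each view is (axis, mask), where mask[u][v] == 1 marks an object pixel.
    """
    kept = []
    for x in range(n):
        for y in range(n):
            for z in range(n):
                voxel = (x, y, z)
                inside_all = True
                for axis, mask in views:
                    u, v = project(voxel, axis)
                    if not mask[u][v]:
                        inside_all = False
                        break
                if inside_all:
                    kept.append(voxel)
    return kept
```

With a single view the carved volume is just the silhouette extruded along the viewing direction; each additional silhouette trims it further, which is why the choice of viewpoints matters.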

To ensure operational efficiency, the next pose planner employs a deep reinforcement learning strategy that selects optimal camera viewpoints to maximize silhouette information gain. This selective viewpoint sampling trims unnecessary camera movements and reduces the total path length the system’s camera must traverse. Experimentally, this innovative approach resulted in a 52% reduction in camera trajectory length and a 19% decrease in execution time compared to baseline methods that employ exhaustive rotational paths around the objects.
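To make the idea of selective viewpoint sampling concrete, here is a simple greedy stand-in for a next pose planner. It is not the learned planner described above: the candidate positions, the fixed per-view gain estimates, the `min_gain` threshold, and the names `greedy_views` and `path_length` are all assumptions for illustration. At each step it moves to the candidate with the best estimated gain per unit of travel and stops when no remaining view is expected to add enough information.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def path_length(points: List[Point]) -> float:
    """Total Euclidean length of a path visiting the points in order."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def greedy_views(
    start: Point,
    candidates: Dict[str, Tuple[Point, float]],
    min_gain: float,
) -> List[str]:
    """Pick views greedily by estimated gain per unit travel distance.

    candidates maps a view name to (camera position, estimated gain).
    Gains are treated as fixed here, which is a simplification: in practice
    the value of a view changes as the reconstruction improves.
    """
    current = start
    remaining = dict(candidates)
    route: List[str] = []
    while remaining:
        useful = {k: v for k, v in remaining.items() if v[1] >= min_gain}
        if not useful:
            break  # no remaining view is worth the extra motion
        best = max(
            useful,
            key=lambda k: useful[k][1] / max(math.dist(current, useful[k][0]), 1e-9),
        )
        route.append(best)
        current = useful[best][0]
        del remaining[best]
    return route
```

Skipping low-gain views is what shortens the camera path relative to visiting every candidate; a learned planner replaces the hand-set gain estimates with predictions from the images seen so far.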

The research team rigorously tested HEAPGrasp on a series of real-world robotic grasping tasks involving twenty different scenes, each containing a mix of transparent, opaque, and specular objects. The system achieved a remarkable 96% success rate in grasping these variably challenging items, significantly outperforming current state-of-the-art grasping technologies. This performance leap confirms HEAPGrasp’s robustness and adaptability to real-world industrial environments.

Beyond the evident technical triumphs, HEAPGrasp represents a scalable, practical integration path for existing robotic platforms. Since it requires only a single RGB camera and a computationally efficient silhouette analysis framework, retrofitting industrial robots with HEAPGrasp capabilities is straightforward. This can substantially lower barriers to automation in logistics warehouses, manufacturing plants, food processing lines, and even hospitality sectors, where the rapid and accurate handling of diverse items is essential.

Associate Professor Shogo Arai, one of the principal investigators, emphasizes the system’s foundational principle: “Even when traditional depth sensors falter, the object contours remain consistently reliable. By harnessing these contours, we can reconstruct object shapes accurately and allow robots to grasp them autonomously.” This insight overturns convention in robotic vision, shifting the focus from depth to silhouette-centric perception, which could redefine autonomous manipulation.

The synergy of advanced computer vision techniques, deep learning, and geometric shape reconstruction embodies the multidisciplinary innovation driving HEAPGrasp. Semantic segmentation tackles the complexity of visual clutter, Shape from Silhouette reconstructs the accurate 3D models, and AI-driven next pose planning optimizes efficiency, together forming a cohesive methodology for handling optical diversity. This integrated system is a pioneering step toward fully autonomous robotic material handling capable of operating in visually complex and physically diverse environments.

Given the compelling experimental outcomes and promising operational efficiencies, HEAPGrasp is poised to influence a broad range of future robotic applications. By overcoming long-standing perceptual challenges, it paves the way for safer, more adaptable, and economically viable robotic systems that can function with minimal human oversight. This advancement will likely accelerate automation adoption in industries critically dependent on the manipulation of varied materials.

The work, published in the prestigious IEEE Robotics and Automation Letters and slated for presentation at the 2026 IEEE International Conference on Robotics and Automation, exemplifies leading-edge research with tangible real-world impact. It heralds a new era where robots equipped with active perception capabilities can understand and interact with their surroundings in ways previously thought unachievable.

Looking forward, HEAPGrasp’s framework offers a foundation upon which further improvements can be built, such as incorporating dynamic object tracking, integrating multi-modal sensing, or expanding the next pose planner’s intelligence. The promising prospects signal a transformative leap in robotics, where diverse optical challenges no longer hinder effective machine perception and manipulation.

Article Title:

HEAPGrasp: Hand-Eye Active Perception to Grasp Objects with Diverse Optical Properties

News Publication Date:

12-Jan-2026

References:

DOI: 10.1109/LRA.2026.3653331

Image Credits:

Image credit: Associate Professor Shogo Arai from Tokyo University of Science, Japan

Keywords

Robotics, Engineering, Machine Learning, Artificial Intelligence, Computer Vision, Manufacturing, Algorithms, Image Processing, Technology

Tags: 3D reconstruction from silhouettes, automation in manufacturing robotics, autonomous robot grasping, HEAPGrasp technology innovation, object handling in restaurants automation, overcoming depth sensor limitations, reflective material perception robots, RGB camera object handling, robot dexterity enhancement, robotic perception without depth sensors, Shape from Silhouette technique, transparent object manipulation robotics