In recent years, protein language models (pLMs) have reshaped protein engineering, making possible design tasks that were out of reach for conventional methods. These models can predict, design, and modify protein sequences, and can propose entirely new proteins that nature has never produced. The potential applications range from enzymes that capture atmospheric carbon dioxide to catalysts that significantly reduce industrial energy consumption and environmental waste.

However, despite these advances, a critical challenge remains unresolved: the opacity of these models’ decision-making. Protein language models operate largely as black boxes, producing outputs without any transparent account of how they reach their predictions. This lack of interpretability makes it hard for scientists to judge whether the models’ predictions are reliable, unbiased, or safe to apply in real-world settings. As these AI systems increasingly influence biotechnological innovation and experimental design, the demand for explainability has become pressing.

A new perspective published in Nature Machine Intelligence by researchers at the Centre for Genomic Regulation (CRG) surveys explainable AI (XAI) techniques tailored to protein language models. The work covers the current landscape, open challenges, and future directions for making these tools more transparent. Dr. Noelia Ferruz, lead author and group leader at CRG, emphasizes that while progress with protein language models has been rapid, our biological understanding of fundamental processes like protein folding and catalysis has lagged, widening the trust gap between AI predictions and experimental validation.

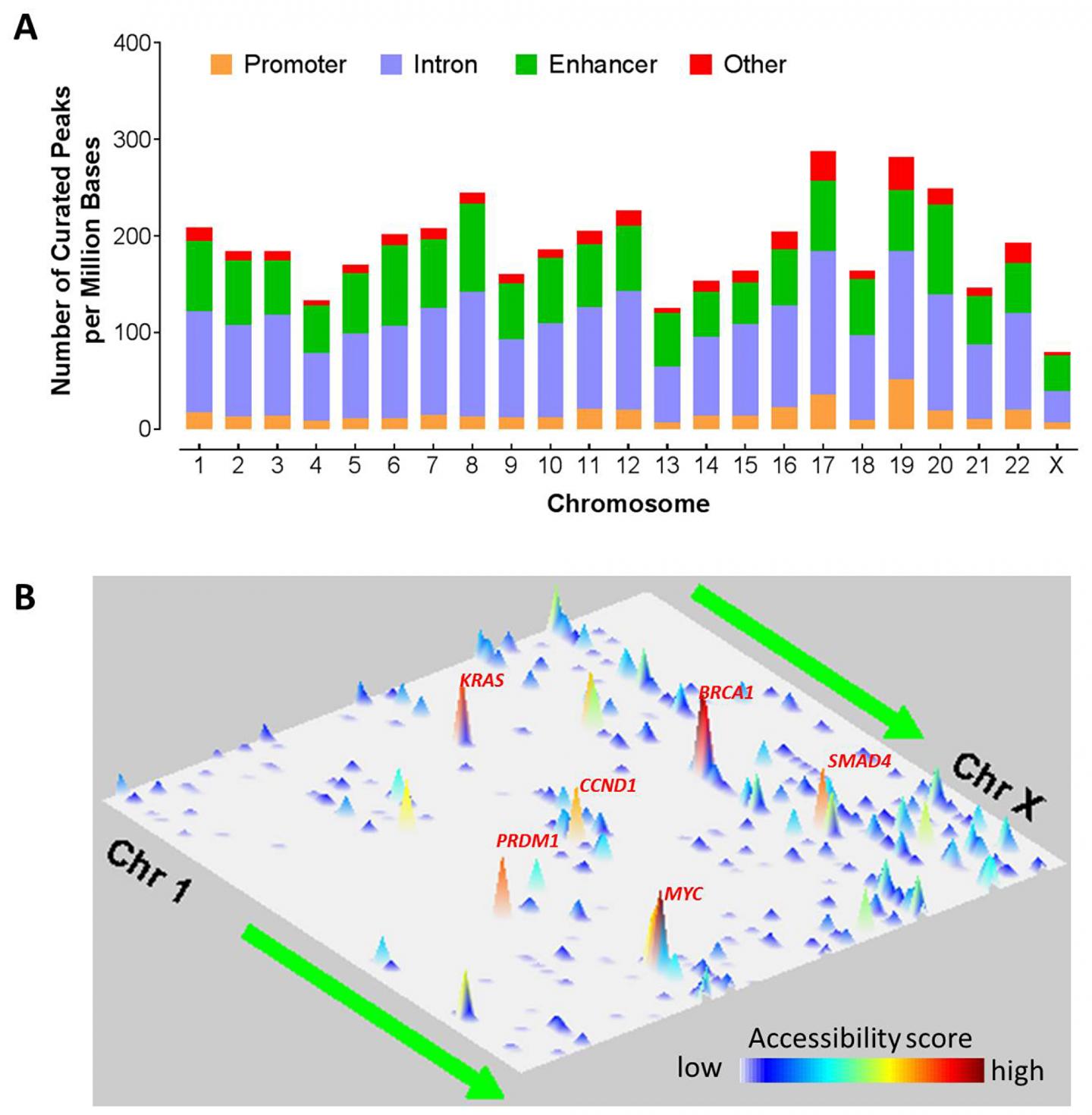

One of the most striking insights from the CRG team’s review is the identification of four integral components along the protein language model’s decision pathway where interpretability efforts can be focused. The first is the training data underlying the model—without a representative dataset, the model might mirror biases or knowledge gaps, particularly concerning human genetic diversity or proteomic variety. Second is the actual protein sequence input, where understanding which amino acids or regions influence predictions is fundamental. Third, the architecture and internal mechanics of these models, including the multi-layered artificial neural networks, must be interpretable to confirm that these systems process biological information correctly. Finally, the model’s response to slight perturbations in input, known as input-output behavior, can reveal how sensitive and robust the model’s understanding of sequences truly is.
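The fourth component, input-output behavior, can be illustrated with a small perturbation scan. The sketch below is not taken from the CRG paper: `toy_score` is a hypothetical stand-in for a real pLM score (it simply counts hydrophobic residues and rewards an invented `HEH` motif), and `mutation_scan` is an assumed helper name. It substitutes every residue at every position and reports how much the score moves on average.

```python
from typing import Callable, List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues


def toy_score(seq: str) -> float:
    """Hypothetical stand-in for a pLM score (NOT a real model).

    Counts hydrophobic residues and adds a bonus if the invented
    motif 'HEH' is present anywhere in the sequence.
    """
    hydrophobic = set("AILMFVW")
    score = sum(1.0 for aa in seq if aa in hydrophobic)
    if "HEH" in seq:
        score += 5.0
    return score


def mutation_scan(seq: str, score_fn: Callable[[str], float]) -> List[float]:
    """Mean absolute score change per position over all single substitutions."""
    base = score_fn(seq)
    sensitivities = []
    for i, wild_type in enumerate(seq):
        deltas = []
        for aa in AMINO_ACIDS:
            if aa == wild_type:
                continue  # skip the unmutated residue
            mutant = seq[:i] + aa + seq[i + 1:]
            deltas.append(abs(score_fn(mutant) - base))
        sensitivities.append(sum(deltas) / len(deltas))
    return sensitivities


sequence = "GSHEHGS"
for position, value in enumerate(mutation_scan(sequence, toy_score)):
    print(position, sequence[position], round(value, 3))
```

In this toy case the three motif positions come out as the most sensitive, which is the kind of signal a perturbation analysis is meant to surface. With a real model, `score_fn` would wrap the model's likelihood or a downstream prediction instead.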

The significance of explainable AI in protein research can be categorized into several conceptual roles that reflect the model’s utility and the degree of human-AI interaction. In most contemporary applications, explainability serves as an “Evaluator,” verifying whether the model has learned biologically recognized patterns such as binding sites or structural motifs. While this function is vital for assessing model quality, it falls short of enabling the discovery of novel biological insights or enhancing model architectures fundamentally.
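One simple way to picture the “Evaluator” role is to check how many of the positions a model treats as important coincide with annotated functional sites. The following is a minimal sketch under stated assumptions: the importance scores and the set of known sites are made-up illustrative values, and `top_k_overlap` is a hypothetical helper, not a metric named in the paper.

```python
from typing import Iterable, Sequence


def top_k_overlap(importance: Sequence[float],
                  known_sites: Iterable[int],
                  k: int) -> float:
    """Fraction of the k highest-importance positions that are known sites."""
    if k <= 0 or k > len(importance):
        raise ValueError("k must be between 1 and the sequence length")
    # Rank positions from most to least important.
    ranked = sorted(range(len(importance)),
                    key=lambda i: importance[i],
                    reverse=True)
    top = set(ranked[:k])
    return len(top & set(known_sites)) / k


# Illustrative values only: per-residue importance from some attribution
# method, and 0-based indices of annotated binding-site residues.
importance_scores = [0.1, 0.2, 0.9, 0.8, 0.7, 0.1, 0.3]
binding_sites = {2, 3, 5}

print(top_k_overlap(importance_scores, binding_sites, k=3))
```

Here two of the three top-ranked positions are annotated sites, so the overlap is 2/3. A high overlap suggests the model has picked up a known pattern, but as the article notes, it says nothing about patterns that are not yet annotated.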

A subset of studies extends beyond mere evaluation, using explainable AI insights as a “Multitasker” to annotate previously uncharacterized proteins or infer additional properties based on learned patterns. This stage points towards more applied assistance from AI, although it still primarily supports existing knowledge rather than generating new understanding. More rarely, researchers have employed explainable AI as an “Engineer” or “Coach”: refining model architectures by cutting unnecessary model features, or guiding the design of proteins towards specific, desirable traits by highlighting critical regions for modification.

However, the most profound aspiration for explainable AI in protein science is the emergence of the “Teacher” role. This advanced stage envisions AI systems that do not merely assist but actively reveal fundamental biological principles previously unknown to human scientists. Similar paradigm shifts have been witnessed in other domains—AlphaZero’s novel chess strategies or AI-assisted reconstructions of damaged ancient scripts stand as harbingers of such transformative potential. In proteins, this would mean AI uncovering new folding rules, catalytic mechanisms, or interaction patterns that could redefine drug design, materials science, and sustainable technology development.

Achieving this teacher-level explainability requires moving from pattern recognition based on statistical correlations to genuine mechanistic understanding. Dr. Ferruz highlights the dream of controllable protein design, where a user could specify precise requirements—for instance, crafting a protein with a particular shape and activity at a defined pH—and receive not only a candidate sequence but a clear mechanistic rationale for why it would function and why alternatives might fail. Such transparent reasoning would immensely accelerate scientific progress and reduce the risks associated with deploying AI-generated designs in the laboratory or clinic.

Nonetheless, the researchers caution that this trajectory towards trustworthy, transparent, and experimentally validated AI models is not guaranteed. Today’s models, powerful though they are, often lack robustness and can be misled by hidden biases or overfitting. To surmount these limitations, the authors call for a concerted community effort to develop robust benchmarking tools and evaluation frameworks dedicated to assessing the fidelity of explainability methods. Open-source platforms that standardize and democratize explainability analyses would facilitate reproducibility and cross-validation across different labs and research groups.

Crucially, no explanation generated by AI can substitute for rigorous experimental verification. Computational predictions, no matter how compelling, must be corroborated by laboratory experiments that confirm the biological relevance of discovered patterns and hypotheses. This blend of AI interpretability and empirical validation is essential for transforming protein language models from intriguing computational artifacts into dependable partners in biotechnological innovation.

The journey toward transparent, reliable, and insightful protein language models thus embodies a symbiotic collaboration between artificial intelligence and experimental biology. It demands a shift in ethos among researchers, where explainability is regarded not as an optional add-on but as an intrinsic design principle. If successful, this paradigm holds the promise of revolutionizing our capacity to design proteins with unprecedented precision and creativity, ultimately addressing some of the most pressing challenges of our time—from environmental sustainability to novel therapeutics.

In conclusion, protein language models stand at the frontier of biological research and engineering, yet their full potential depends on our ability to peer inside the “black box.” Explainable AI is key to unlocking not just more effective models, but models that teach us new biology and inspire new innovations. As these technologies continue to evolve, they may herald a new era in which AI serves as both generator and elucidator, shaping the future of science.

Subject of Research: Protein language models and explainable artificial intelligence in protein engineering.

News Publication Date: 10-May-2026.

Web References:

https://www.nature.com/articles/s42256-026-01232-w

DOI: 10.1038/s42256-026-01232-w

Method of Research: Literature review.

Keywords

Artificial intelligence, Protein engineering, Explainable AI, Protein folding, Catalysis, Machine learning, Biological insight, Protein design, Biotechnological innovation, Transparency in AI, Model interpretability, Computational biology.

Tags: AI interpretability in biotechnology, black box AI challenges protein design, CRG research on explainable protein AI, environmental impact of AI-designed proteins, explainable AI in protein engineering, explainable machine learning for proteins, protein catalyst design AI, protein engineering with AI models, protein language models explainability, protein sequence prediction safety, safe AI applications in protein engineering, transparent AI for protein design