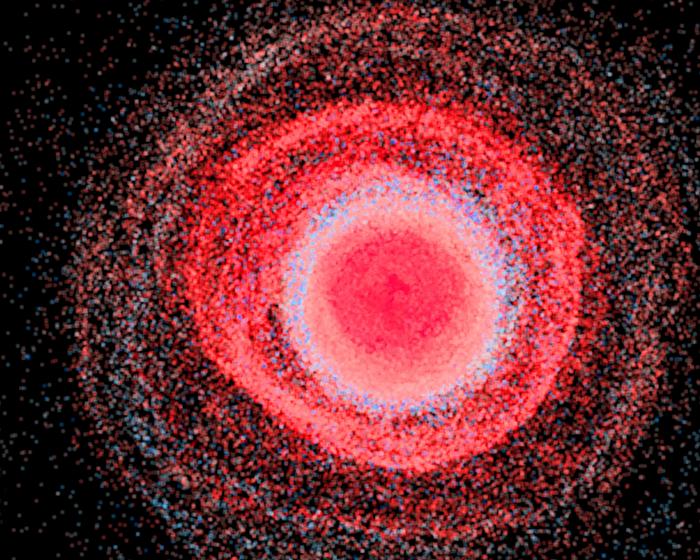

Credit: Los Alamos National Laboratory

The origin of heavy elements in our universe is theorized to be the result of neutron star collisions, which produce conditions hot and dense enough for free neutrons to merge with atomic nuclei and form new elements in a split-second window of time. Testing this theory and answering other astrophysical questions requires predictions for a vast range of masses of atomic nuclei. Los Alamos National Laboratory scientists are front and center in using machine learning algorithms (an application of artificial intelligence) to successfully model the atomic masses of the entire nuclide chart — the combination of all possible protons and neutrons that defines elements and their isotopes.

“Many thousands of atomic nuclei that have yet to be measured may exist in nature,” said Matthew Mumpower, a theoretical physicist and co-author on several recent papers detailing atomic masses research. “Machine learning algorithms are very powerful, as they can find complex correlations in data, a result that theoretical nuclear physics models struggle to efficiently produce. These correlations can provide information to scientists about ‘missing physics’ and can in turn be used to strengthen modern nuclear models of atomic masses.”

Simulating the rapid neutron-capture process

Most recently, Mumpower and his colleagues, including former Los Alamos summer student Mengke Li and postdoc Trevor Sprouse, authored a paper in Physics Letters B that described simulating an important astrophysical process with a physics-based machine learning mass model. The r process, or rapid neutron-capture process, is the astrophysical process that occurs in extreme environments, like those produced by neutron star collisions. Heavy elements may result from this “nucleosynthesis”; in fact, half of the heavy isotopes up to bismuth and all of thorium and uranium in the universe may have been created by the r process.

But modeling the r process requires theoretical predictions of atomic masses currently beyond experimental reach. The team’s physics-informed machine-learning approach trains a model on a random selection of masses from the Atomic Mass Evaluation, a large database of measured masses. The researchers then use the masses predicted by the trained model to simulate the r process. The model allowed the team to simulate r-process nucleosynthesis with machine-learned mass predictions for the first time, a significant feat because machine learning predictions generally break down when extrapolating.
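The workflow in the paragraph above (random selection of training masses, a model constrained by physics, then extrapolation beyond the data) can be illustrated with a deliberately simple stand-in. The sketch below is not the team's model: it swaps the machine learning model for a linear least-squares fit of textbook liquid-drop terms, and it uses a synthetic table rather than the Atomic Mass Evaluation. The coefficient values, the grid of nuclei, the noise level, and all function names are assumptions made for illustration only.

```python
import numpy as np

# Textbook-style liquid-drop coefficients (MeV). Assumed values, used only to
# generate a synthetic table; they are not taken from the papers cited here.
TRUE_COEFFS = np.array([15.8, 18.3, 0.714, 23.2])


def liquid_drop_features(z, n):
    """Physics-motivated terms: volume, surface, Coulomb, asymmetry."""
    z = np.asarray(z, dtype=float)
    n = np.asarray(n, dtype=float)
    a = z + n
    return np.stack(
        [a, -a ** (2.0 / 3.0), -z * (z - 1.0) / a ** (1.0 / 3.0), -((n - z) ** 2) / a],
        axis=-1,
    )


def make_table(z_values, n_over_z):
    """Build (Z, N) pairs on a grid of neutron-to-proton ratios."""
    z, ratio = np.meshgrid(z_values, n_over_z, indexing="ij")
    n = np.rint(z * ratio)
    return z.ravel(), n.ravel()


rng = np.random.default_rng(0)

# Synthetic stand-in for a measured table (NOT the Atomic Mass Evaluation):
# binding energies from the liquid-drop form plus 0.1 MeV Gaussian noise.
z_known, n_known = make_table(np.arange(20, 91), np.linspace(1.0, 1.4, 9))
b_known = liquid_drop_features(z_known, n_known) @ TRUE_COEFFS
b_known = b_known + rng.normal(0.0, 0.1, size=b_known.shape)

# Random selection of half of the table for training.
train = rng.choice(b_known.size, size=b_known.size // 2, replace=False)
fit_coeffs, *_ = np.linalg.lstsq(
    liquid_drop_features(z_known[train], n_known[train]), b_known[train], rcond=None
)

# Extrapolate to nuclei more neutron-rich than any in the synthetic table.
z_far, n_far = make_table(np.arange(20, 91), np.array([1.6]))
b_pred = liquid_drop_features(z_far, n_far) @ fit_coeffs
b_true = liquid_drop_features(z_far, n_far) @ TRUE_COEFFS
max_error = float(np.max(np.abs(b_pred - b_true)))
print(f"largest extrapolation error: {max_error:.3f} MeV")
```

Because the fitted form is the same one that generated the synthetic data, the extrapolation stays well behaved here. That is the point of the toy: a model restricted to physically motivated terms has far less freedom to drift outside the training region than an unconstrained one. Real nuclear masses contain structure that the liquid-drop terms miss, which is where a learned model would be expected to add value.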

“We’ve shown that machine learning atomic masses can open the door to predictions beyond where we have experimental data,” Mumpower said. “The critical piece is that we tell the model to obey the laws of physics. By doing so, we enable physics-based extrapolations. Our results are on par with or outperform contemporary theoretical models and can be immediately updated when new data is available.”

Investigating nuclear structures

The r-process simulations complement the research team’s application of machine learning to related investigations of nuclear structure. In a recent article in Physical Review C selected as an Editor’s Suggestion, the team used machine learning algorithms to reproduce nuclear binding energies with quantified uncertainties; that is, they were able to ascertain the energy needed to separate an atomic nucleus into protons and neutrons, along with an associated error bar for each prediction. The algorithm thus provides information that would otherwise take significant computational time and resources to obtain from current nuclear modeling.
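For readers who want the quantity behind that description, the standard textbook definitions are sketched below. The notation is an assumption made here and is not taken from the cited papers: M(Z,N) is the atomic mass of a nuclide with Z protons and N neutrons, m_H is the mass of the hydrogen atom, and m_n is the neutron mass. Small differences in electron binding energy are neglected.

```latex
% Binding energy: energy needed to separate the nucleus into free nucleons
B(Z,N) = \left[ Z\, m_{\mathrm{H}} + N\, m_{n} - M(Z,N) \right] c^{2}

% One-neutron separation energy, which follows from a mass difference
S_{n}(Z,N) = B(Z,N) - B(Z,N-1)
           = \left[ M(Z,N-1) + m_{n} - M(Z,N) \right] c^{2}
```

The second relation shows why mass predictions matter for neutron capture: the energy needed to remove a neutron from a nucleus depends only on differences between neighboring masses, so an error bar on each predicted mass carries directly into these quantities.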

In related work, the team used their machine learning model to combine precision experimental data with theoretical knowledge. These results have motivated some of the first experimental campaigns at the new Facility for Rare Isotope Beams, which seeks to expand the known region of the nuclear chart and uncover the origin of the heavy elements.

Papers: “Atomic masses with machine learning for the astrophysical r process,” Physics Letters B. DOI: 10.1016/j.physletb.2023.138385

“Bayesian averaging for ground state masses of atomic nuclei in a Machine Learning approach,” Frontiers in Physics. DOI: 10.3389/fphy.2023.1198572

“Physically interpretable machine learning for nuclear masses,” Physical Review C. DOI: 10.1103/PhysRevC.106.L021301

“Nuclear masses learned from a probabilistic neural network,” Physical Review C. DOI: 10.1103/PhysRevC.106.014305

Funding: This work was supported by the Laboratory Directed Research and Development program at Los Alamos and by the NASA Emerging Worlds program.

Journal: Physics Letters B

DOI: 10.1016/j.physletb.2023.138385

Article Title: Atomic masses with machine learning for the astrophysical r process

Article Publication Date: 1-Jan-2024