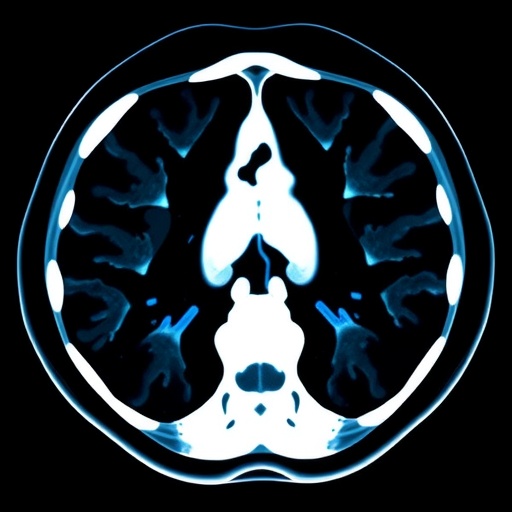

In an advance with the potential to reshape medical imaging, a research team funded by the National Institutes of Health (NIH) has unveiled Merlin, a versatile machine learning model designed to extract more information from computed tomography (CT) scans. Rather than targeting a single finding, the model draws on imaging, report, and diagnosis-code data to perform a wide range of diagnostic and prognostic tasks. Merlin’s ability to interpret complex 3D abdominal CT scans is an important step toward automating and improving parts of radiological assessment.

Merlin represents a new paradigm for artificial intelligence in medical imaging. It is a foundation model, a type of AI system that learns general-purpose representations from large datasets rather than from labels curated for a single narrow task. Unlike conventional approaches restricted to narrowly defined tasks, Merlin was trained on an extensive and unique dataset of more than 15,000 3D abdominal CT scans paired with their radiology reports, together with nearly one million diagnosis codes. This dataset comes from the Stanford University School of Medicine and forms the most comprehensive abdominal CT database assembled to date, allowing Merlin to learn relationships between what appears in an image and the medical language used to describe it.

Merlin’s strength comes from an architecture that fuses three-dimensional scan data with the natural-language content of radiology reports. This integration lets the model perform more than 750 distinct tasks, ranging from basic outlining of anatomical structures to predicting the development of disease years before it becomes clinically apparent. By learning from both images and text during training, Merlin bridges the gap between raw imaging data and diagnostic interpretation, work that conventionally requires expert radiologists and, often, additional rounds of clinical testing and evaluation.

Merlin was evaluated on more than 50,000 previously unseen abdominal CT scans from four independent hospitals. Its outputs agreed closely with the diagnostic labels and conclusions assigned by clinicians. For example, in predicting the International Classification of Diseases (ICD) codes associated with individual scans, Merlin outperformed other contemporary AI tools, exceeding 81% accuracy across a broad set of diagnostic labels and reaching 90% accuracy for certain disease subsets. These results point to Merlin’s potential as a reliable clinical assistant in routine radiology workflows.

Beyond diagnosing existing conditions, Merlin can also forecast future disease. In tests focused on chronic conditions such as diabetes, osteoporosis, and cardiovascular disease, the model identified individuals at elevated risk years before clinical onset, using only their abdominal CT scans. Merlin’s predictive accuracy reached 75%, compared with 68% for comparator models. This suggests that abdominal CT scans may contain subtle imaging biomarkers of future disease that human readers do not routinely recognize but that Merlin can detect.

A particularly compelling aspect of Merlin’s versatility is its ability to adapt to imaging domains outside its training data. Although it was trained exclusively on abdominal CT scans, Merlin was also tested on chest CT images, a domain with different anatomical and pathological features. It matched or exceeded the diagnostic performance of models trained specifically on chest imaging data, further evidence of its generalizability and of the power of the foundation-model approach in medical AI.

Although Merlin is a “jack-of-all-trades,” it consistently matched or outperformed specialized models built for individual diagnostic tasks. This breadth has generated interest in integrating Merlin into clinical practice, not merely as a supplemental tool but potentially as a primary diagnostic aid. By reducing reliance on scarce radiological expertise, it could help ease growing physician shortages while streamlining diagnostic workflows, speeding up patient care and the start of treatment.

Despite these advances, some tasks remain challenging. Drafting complete radiology reports from scratch, for example, will require further refinement of Merlin’s learning algorithms and fine-tuning on more targeted datasets. The research team advocates continued refinement through domain-specific customization and encourages practitioners to adapt Merlin with local clinical data, tailoring its performance to specialized clinical settings or particular patient populations.

At its core, Merlin represents a leap forward in multimodal artificial intelligence research, combining the spatial complexity of volumetric CT data with the semantic depth of diagnostic narratives. This pairing allows the model to recognize and predict disease with a degree of nuance that previous-generation AI systems could not reach. Together, its data scale, model design, and diverse tasks position Merlin as a foundation on which future medical imaging innovations can be built.

This research, supported by several NIH institutes under multiple grants, also reflects a close collaboration between AI researchers and clinical scientists. It shows that AI-driven tools can not only automate routine image analysis but also surface new medical insights, shifting radiology from a solely human-driven discipline toward a human-machine partnership.

As the research community adopts and builds on Merlin, the implications extend beyond immediate clinical applications. The model’s capacity to identify subtle patterns that human readers miss raises hopes of discovering new imaging biomarkers. Such biomarkers could open new avenues for understanding disease pathophysiology, improving risk stratification, and advancing personalized medicine, reshaping the landscape of preventive healthcare.

Ultimately, Merlin points to a future in which advanced AI models streamline clinical decision-making, improve diagnostic accuracy, and expand the role of medical imaging in health management. As senior author Akshay Chaudhari of Stanford University noted, this foundation model is poised to serve as a robust backbone for the broader medical community, and the potential applications built on it are limited only by the limits of innovation itself.

Subject of Research: Medical imaging and machine learning application in computed tomography (CT) scan analysis.

Article Title: Merlin: A Computed Tomography Vision Language Foundation Model and Dataset

News Publication Date: 4-Mar-2026

Web References:

https://www.nature.com/articles/s41586-026-10181-8

References:

Louis Blankemeier, Ashwin Kumar, et al. Merlin: A Computed Tomography Vision Language Foundation Model and Dataset. Nature (2026). DOI: 10.1038/s41586-026-10181-8.

Keywords

Health and medicine, Artificial intelligence, Medical imaging, Clinical imaging

Tags: 3D abdominal CT scan interpretation, advanced diagnostic algorithms, AI-powered CT scan analysis, artificial intelligence for precision medicine, automated radiological assessment, clinical diagnosis with AI, foundation models in healthcare, integration of radiology reports and imaging, large-scale medical imaging datasets, machine learning in medical imaging, NIH-funded AI research, Stanford University medical imaging database