In the fast-moving field of automotive technology, vision sensing is a key element of the future of intelligent transportation. A recent comprehensive study, “Vision Sensing for Intelligent Driving: Technical Challenges and Innovative Solutions,” published in Engineering, surveys current technologies and pioneering methods for improving the vision sensing capabilities of autonomous vehicles. The work was co-authored by Xinle Gong and Zhihua Zhong, researchers from leading Chinese institutions specializing in mechanical and automotive engineering.

Vision sensing is a cornerstone of autonomous driving systems, providing the real-time, high-fidelity data needed to navigate complex road environments. Compared with radar and lidar, vision sensors (primarily cameras) capture rich visual information such as color and texture, which supports a detailed understanding of dynamic surroundings, including recognizing traffic signals, pedestrians, and subtle road anomalies. This level of perception is essential for the split-second decisions that automated driving systems must make, and it contributes directly to both safety and driving efficiency.

Despite this potential, automotive-grade camera systems face far more rigorous demands than consumer or industrial cameras. They must perform continuously under diverse and often harsh conditions, with illumination ranging from glaring sunlight to nighttime darkness and with adverse weather such as rain, fog, and dust. Meeting these demands requires robust hardware paired with advanced imaging algorithms that can compensate for such variability without sacrificing real-time responsiveness.

One significant hurdle lies in optical lens design, where physical constraints such as depth of field and aperture limit image clarity and the amount of detail captured. Conventional lenses can lose image quality as the vehicle moves between sharply contrasting lighting conditions, which are common in everyday driving. In addition, the wide-angle lenses often used to obtain a broad field of view introduce geometric distortions such as barrel or pincushion distortion. These shift the apparent position and size of objects in the image, complicating accurate spatial mapping and hazard prediction.
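To show how barrel and pincushion distortion move points in an image, the sketch below uses the common polynomial radial distortion model (the radial terms of the Brown-Conrady model) on normalized image coordinates. It is an illustrative assumption, not the approach described by Gong and Zhong, and the function names and coefficient values are hypothetical.

```python
from __future__ import annotations


def distort_radial(x: float, y: float, k1: float, k2: float = 0.0) -> tuple[float, float]:
    """Apply radial lens distortion to normalized image coordinates (x, y).

    Model (radial terms only): x_d = x * (1 + k1*r^2 + k2*r^4), likewise for y,
    where r^2 = x^2 + y^2 is the squared distance from the optical center.
    k1 < 0 produces barrel distortion (points pulled toward the center);
    k1 > 0 produces pincushion distortion (points pushed outward).
    """
    r2 = x * x + y * y
    scale = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * scale, y * scale


def undistort_radial(xd: float, yd: float, k1: float, k2: float = 0.0,
                     iterations: int = 20) -> tuple[float, float]:
    """Approximately invert distort_radial by fixed-point iteration.

    Starts from the distorted point and repeatedly divides it by the scale
    factor evaluated at the current estimate. Adequate for mild distortion.
    """
    x, y = xd, yd
    for _ in range(iterations):
        r2 = x * x + y * y
        scale = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / scale, yd / scale
    return x, y


# Hypothetical barrel-distortion coefficient for a wide-angle lens.
K1 = -0.2
edge_point = (0.8, 0.0)  # a point near the edge of the field of view
xd, yd = distort_radial(*edge_point, k1=K1)
print(f"distorted: ({xd:.4f}, {yd:.4f})")  # pulled toward the center
print("recovered:", undistort_radial(xd, yd, k1=K1))
```

Calibration tools typically estimate such coefficients from images of a known pattern and then undistort every frame. The fixed-point inverse used here is a simple choice that converges for mild distortion but is not guaranteed to converge for strong coefficients.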

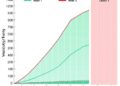

The performance of CMOS (complementary metal-oxide-semiconductor) image sensors, central to automotive vision systems, is constrained by an inherent trade-off between spatial resolution and frame rate. Higher resolution captures finer detail but produces a much larger data stream, which can overwhelm the vehicle’s processing units and force lower frame rates, impairing the detection of fast-moving objects. Moreover, current sensors typically achieve dynamic ranges of around 120 to 140 dB, which limits their ability to handle scenes with extreme lighting contrast, such as a tunnel exit into bright daylight. Smaller pixels, needed for higher resolution, also have a lower saturation (full-well) charge capacity, limiting the sensor’s sensitivity to subtle variations in luminous intensity.
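The dynamic-range and data-rate figures above can be made concrete with two back-of-the-envelope calculations. The sketch assumes the usual image-sensor convention DR = 20 log10(max/min), a simplified single-exposure model in which dynamic range is set by full-well capacity over read noise, and hypothetical sensor parameters (resolution, bit depth, frame rate, full well, read noise) chosen only for illustration.

```python
import math


def dynamic_range_db(max_signal: float, min_signal: float) -> float:
    """Dynamic range in decibels, using the image-sensor convention 20*log10(max/min)."""
    return 20.0 * math.log10(max_signal / min_signal)


def contrast_ratio(db: float) -> float:
    """Max-to-min intensity ratio corresponding to a dynamic range in dB."""
    return 10.0 ** (db / 20.0)


def raw_data_rate_gbps(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Uncompressed sensor output in gigabits per second (ignores blanking and protocol overhead)."""
    return width * height * bits_per_pixel * fps / 1e9


# 120 dB and 140 dB expressed as intensity ratios (1e6:1 and 1e7:1).
for db in (120, 140):
    print(f"{db} dB -> {contrast_ratio(db):,.0f}:1")

# Simplified single-exposure model: full-well capacity / read noise, in electrons
# (hypothetical values). Halving the full well, e.g. with a smaller pixel, costs about 6 dB.
for full_well in (10_000, 5_000):
    print(f"full well {full_well} e-: {dynamic_range_db(full_well, 2.0):.1f} dB")

# Hypothetical 3840x2160, 12-bit raw sensor at two frame rates.
for fps in (30, 60):
    print(f"{fps} fps: {raw_data_rate_gbps(3840, 2160, 12, fps):.2f} Gbit/s")
```

With these hypothetical values a single exposure reaches about 74 dB, and doubling the frame rate doubles the raw data stream (from about 3.0 to 6.0 Gbit/s) that the processing chain must absorb.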

Image signal processors (ISPs), which convert raw pixel data into usable images, face bottlenecks of their own. Contemporary ISPs are often constrained by limited on-chip resources and insufficient parallelism, which caps their throughput and adds processing latency, a critical problem when the vehicle must respond in real time. Noise in low-light scenes is a further challenge: noise suppression and signal enhancement algorithms must keep improving so that they preserve image integrity without erasing vital visual cues.
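Why low light is hard for an ISP can be seen from the standard pixel noise model: photon shot noise follows Poisson statistics, so its variance equals the mean signal, and read noise adds in quadrature. The sketch below is a simplified illustration (it ignores fixed-pattern noise and quantization) with a hypothetical read-noise value and hypothetical function names; it is not the noise model used in the paper.

```python
import math


def snr_electrons(signal_e: float, read_noise_e: float, dark_e: float = 0.0) -> float:
    """Simplified pixel signal-to-noise ratio, all quantities in electrons.

    Shot-noise variance equals the mean signal (Poisson), dark current adds its
    own Poisson variance, and read noise adds in quadrature.
    """
    noise = math.sqrt(signal_e + dark_e + read_noise_e ** 2)
    return signal_e / noise


def snr_db(snr: float) -> float:
    """Express an amplitude SNR in decibels."""
    return 20.0 * math.log10(snr)


# From a bright scene down to deep low light, with a hypothetical 2 e- read noise.
for signal in (10_000, 1_000, 100, 10):
    s = snr_electrons(signal, read_noise_e=2.0)
    print(f"{signal:>6} e-  SNR = {s:7.2f} ({snr_db(s):5.1f} dB)")
```

As the collected signal drops from 10,000 to 10 electrons, the SNR in this model falls from roughly 100 to under 3, the regime in which denoising algorithms must separate real detail from noise.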

Addressing these multifaceted challenges calls for new approaches. The authors highlight emerging photosensitive materials such as quantum dots and perovskites, known for strong light absorption and efficient charge transport, as promising candidates for substantially improving sensor performance. These materials could transform the photoelectric conversion process, enabling cameras to sense a broader spectrum of light with higher sensitivity and lower noise.

Bio-inspired designs, particularly those modeled on the layered processing architecture of the human visual system, could also extend dynamic range and enable ultra-high-speed vision. Mimicking retinal and neural mechanisms may allow efficient adaptive exposure control and rapid scene analysis, addressing current limitations. In parallel, neuromorphic computing, which emulates the brain-like parallelism of biological neural networks, could substantially reduce energy consumption and accelerate image processing to meet the demands of autonomous driving.

Quantum computing and its associated algorithms represent another frontier, potentially offering frameworks for handling massive datasets efficiently and robustly. Combining these emerging computational methods with novel sensor technologies could yield vision systems capable of real-time, context-aware perception across a wide range of driving conditions. This evolution is vital for full vehicle autonomy, where split-second judgment and environmental comprehension are non-negotiable.

The article’s in-depth technical exposition outlines a path forward for researchers aiming to overcome current barriers. By drawing on breakthroughs in materials science, optical engineering, and artificial intelligence, vision sensing can advance toward much greater intelligence and reliability. This trajectory supports not only individual vehicle capabilities but also the broader smart transportation ecosystems envisioned for safer, more efficient urban mobility.

Beyond these engineering challenges, integrating advanced vision sensors into vehicles requires innovative packaging and system design that ensures compactness, heat dissipation, and electromagnetic compatibility without impairing optical performance. Combining expertise across optics, electronics, software, and mechanical engineering is essential to turn these high-performance vision modules into products suitable for mass-market deployment.

In conclusion, the promising avenues identified by Gong and Zhong mark a transformative phase in automotive vision sensing. Their analysis of technical obstacles, paired with imaginative solutions, underscores the interplay between fundamental research and applied technology. As the automotive industry moves toward fully autonomous driving, advanced vision sensors built on these scientific insights are likely to be key enablers of intelligent vehicles.

Article Title: Vision Sensing for Intelligent Driving: Technical Challenges and Innovative Solutions

News Publication Date: 17-Feb-2026

Web References:

https://doi.org/10.1016/j.eng.2025.06.038

https://www.sciencedirect.com/journal/engineering

Image Credits: Xinle Gong, Zhihua Zhong

Keywords

Automotive engineering, vision sensing, intelligent driving, autonomous vehicles, CMOS image sensors, optical lenses, dynamic range, image processing, neuromorphic computing, quantum dots, perovskites, advanced materials

Tags: advanced driver-assistance systems (ADAS), automotive-grade camera systems, challenges in vision sensing technology, environmental robustness of vision sensors, high-fidelity visual data for intelligent transportation, innovative solutions for intelligent driving, overcoming harsh weather conditions in vision sensing, pedestrian detection in autonomous vehicles, perception systems for self-driving cars, real-time data processing in autonomous driving, vision sensing for autonomous vehicles, vision-based traffic signal recognition