Researchers have introduced an object detection method designed to improve the accuracy and efficiency of locating the prostate gland in surface-based abdominal ultrasound images. The approach, detailed by Bennett, Barrett, Gnanapragasam, and colleagues in a 2025 paper in Communications Engineering, targets longstanding challenges in ultrasound-based prostate imaging, a field that has historically struggled with image clarity and precise organ localization.

Ultrasound imaging is a cornerstone of medical diagnostics, especially in urology, where it is extensively used to evaluate the prostate for conditions such as benign prostatic hyperplasia and prostate cancer. Traditional ultrasound techniques, however, suffer from limitations including speckle noise, low contrast resolution, and anatomical variability across patients. These factors complicate the prostate’s visualization, leading to potential inaccuracies during diagnosis and treatment planning. The innovative object detection framework introduced by the research team aims to mitigate these limitations by automatically identifying the prostate gland’s position, thereby guiding clinicians more reliably.

The core technology utilizes advanced deep learning algorithms, a subset of artificial intelligence (AI), trained on extensive datasets of abdominal ultrasound images. This AI-driven model extracts complex visual patterns and anatomical features that escape conventional imaging assessment by human operators. By integrating convolutional neural networks (CNNs) optimized for surface-based imaging data, the system adapts to variations in patient anatomy and operator technique, providing consistent and reproducible localization results.

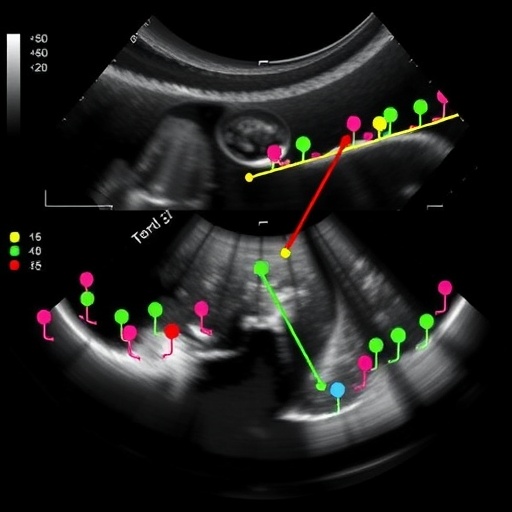

Underpinning this approach is a multi-stage processing pipeline. Initially, raw ultrasound images undergo preprocessing to enhance signal quality and suppress noise artifacts. Enhanced images then enter the object detection network, where candidate regions potentially containing the prostate are proposed. These proposals undergo rigorous refinement and classification to precisely delineate the gland’s boundaries. The end result is a heatmap-based output highlighting the prostate’s location, ready to be overlaid seamlessly onto the original ultrasound scan.
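The refinement and heatmap stages described above can be sketched in a few lines. This is a minimal illustration, not the authors' implementation: the article does not say which detector, refinement rule, or heatmap form was used, so greedy non-maximum suppression, the Gaussian heatmap, the helper names (`iou`, `non_max_suppression`, `box_to_heatmap`), and the example boxes and scores are all assumptions.

```python
import math

def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def non_max_suppression(proposals, iou_threshold=0.5):
    """Greedy NMS over (box, score) pairs; returns survivors, best score first."""
    kept = []
    for box, score in sorted(proposals, key=lambda p: p[1], reverse=True):
        # Keep a proposal only if it does not heavily overlap a better one.
        if all(iou(box, kept_box) <= iou_threshold for kept_box, _ in kept):
            kept.append((box, score))
    return kept

def box_to_heatmap(box, width, height):
    """Gaussian heatmap (rows of floats in (0, 1]) peaked at the box centre."""
    cx, cy = (box[0] + box[2]) / 2, (box[1] + box[3]) / 2
    sx = max((box[2] - box[0]) / 2, 1e-6)  # spread follows the box half-width
    sy = max((box[3] - box[1]) / 2, 1e-6)  # spread follows the box half-height
    return [
        [math.exp(-0.5 * (((x - cx) / sx) ** 2 + ((y - cy) / sy) ** 2))
         for x in range(width)]
        for y in range(height)
    ]

# Hypothetical candidate regions from a detector: (box, confidence score).
proposals = [
    ((10, 10, 30, 30), 0.90),
    ((12, 11, 31, 29), 0.75),  # near-duplicate of the first box
    ((40, 40, 50, 50), 0.30),
]
kept = non_max_suppression(proposals)
best_box, best_score = kept[0]
heatmap = box_to_heatmap(best_box, width=64, height=64)
```

Here the near-duplicate proposal is suppressed, and the heatmap peaks at the centre of the highest-scoring box. In practice the heatmap would be resized to the scan and alpha-blended over it.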

One of the notable challenges addressed by the authors involves the inherent difficulty of surface-based ultrasound, which captures images through the abdominal wall, often leading to compromised image quality due to tissue heterogeneity and depth variability. Unlike transrectal ultrasound—a more invasive but clearer imaging modality—surface-based ultrasound is non-invasive and more comfortable for patients, but requires sophisticated image analysis to interpret accurately. The researchers’ method effectively compensates for these obstacles, opening up new avenues for non-invasive prostate assessment.

Furthermore, the incorporation of object detection techniques represents a paradigm shift in prostate imaging. Traditionally, prostate localization relied on manual annotations by radiologists or semi-automated image segmentation methods that are often time-consuming and subject to inter-operator variability. Automated object detection leverages pattern recognition capabilities to localize organs without extensive user intervention, reducing observer bias and accelerating clinical workflows.

The clinical implications could be substantial. More precise prostate localization could improve the targeting of biopsies, helping ensure that suspicious tissue is sampled accurately. It also holds promise for better guidance during treatments such as high-intensity focused ultrasound (HIFU) and brachytherapy, where precise knowledge of the gland’s location is critical for delivering therapy to the target while sparing surrounding tissues.

Importantly, the team’s evaluation methodology included rigorous validation against ground-truth data derived from expert annotations and imaging modalities with higher spatial resolution, such as MRI. Quantitative metrics demonstrated significant improvements in detection accuracy and consistency compared to existing ultrasound-based localization strategies. This empirical evidence underscores the robustness and potential clinical readiness of the proposed method.
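The article does not name the metrics used, so the following is only a sketch of how box-level agreement with expert annotations is commonly scored. Intersection over union (IoU), a hit rate at an IoU threshold, and centre-point error are assumed metrics; the function names and the example boxes are hypothetical.

```python
import math

def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def center_error(pred, truth):
    """Euclidean distance (in pixels) between the two box centres."""
    px, py = (pred[0] + pred[2]) / 2, (pred[1] + pred[3]) / 2
    tx, ty = (truth[0] + truth[2]) / 2, (truth[1] + truth[3]) / 2
    return math.hypot(px - tx, py - ty)

def evaluate(predictions, ground_truth, iou_threshold=0.5):
    """Summarise agreement between predicted and annotated boxes, paired by index."""
    ious = [iou(p, t) for p, t in zip(predictions, ground_truth)]
    errors = [center_error(p, t) for p, t in zip(predictions, ground_truth)]
    n = len(ious)
    return {
        "mean_iou": sum(ious) / n,
        # Fraction of images whose prediction overlaps the annotation enough.
        "hit_rate": sum(v >= iou_threshold for v in ious) / n,
        "mean_center_error": sum(errors) / n,
    }

# Hypothetical predicted and expert-annotated boxes for two images.
predictions = [(10, 10, 30, 30), (0, 0, 10, 10)]
ground_truth = [(10, 10, 30, 30), (5, 5, 15, 15)]
report = evaluate(predictions, ground_truth)
```

In this toy case the first prediction matches its annotation exactly and the second overlaps only partially, so one of the two images counts as a hit at the 0.5 threshold.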

Another significant contribution is the algorithm’s capacity to generalize across diverse patient populations. Recognizing the variability in prostate size, shape, and position among individuals, the researchers curated a diverse training dataset encompassing various demographics and clinical conditions. This inclusivity promotes equitable diagnostic performance and helps reduce algorithmic bias, a vital consideration in AI development for healthcare.

The authors also explored the interpretability of their deep learning model. By employing visualization techniques such as class activation mapping, they provided insights into which ultrasound features were most informative for the detection task. This transparency bolsters clinician trust and facilitates integration into clinical practice, where understanding AI decision-making processes remains a critical step for adoption.
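As a rough illustration of class activation mapping in its classic form, the map is a weighted sum of the final convolutional feature maps, using the class weights of the output layer. How the authors adapted the idea to their detector is not described in the article, so the function name, array shapes, and toy values below are assumptions.

```python
import numpy as np

def class_activation_map(feature_maps, class_weights):
    """Classic CAM: weighted sum of feature maps, rectified and scaled to [0, 1].

    feature_maps:  array of shape (K, H, W), the last convolutional activations.
    class_weights: array of shape (K,), output-layer weights for the target class.
    """
    cam = np.tensordot(class_weights, feature_maps, axes=1)  # sum_k w_k * A_k
    cam = np.maximum(cam, 0.0)  # keep only evidence in favour of the class
    peak = cam.max()
    return cam / peak if peak > 0 else cam

# Toy example: two 3x3 feature maps, one diagonal and one uniform.
feature_maps = np.stack([np.eye(3), np.ones((3, 3))])
class_weights = np.array([2.0, -1.0])
cam = class_activation_map(feature_maps, class_weights)
```

With these toy values the diagonal map is weighted positively and the uniform map negatively, so only the diagonal pixels remain highlighted. On a real scan the map would be upsampled to the image size before being shown to a clinician.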

The fusion of object detection with surface-based abdominal ultrasound represents a step toward smarter and more intuitive diagnostic tools. Because the technology can be incorporated into existing ultrasound systems, hospitals and clinics can potentially upgrade their capabilities without heavy infrastructure changes. This is notable given the growing emphasis on cost-effective healthcare innovations that do not disrupt established clinical routines.

Looking ahead, the research team envisions extending their approach to real-time applications, enabling live image analysis during ultrasound examinations. Such real-time feedback would empower sonographers to make immediate adjustments and capture optimal images, further enhancing diagnostic accuracy and patient outcomes. Additionally, merging prostate localization with automated lesion detection could create a comprehensive AI pipeline for prostate cancer screening and management.

Despite these promising developments, the authors acknowledge challenges that warrant further investigation. For example, achieving high detection accuracy in cases with severe calcifications or post-surgical anatomical alterations remains demanding. Also, expanding the dataset size and diversity through multi-center collaborations would strengthen the model’s generalizability and robustness.

This pioneering work not only exemplifies the potential of AI-driven image analysis but also underscores a broader trend in medical imaging toward non-invasive, patient-friendly diagnostics augmented by cutting-edge computational methods. The transformative potential of object detection in ultrasound—traditionally considered less sophisticated than modalities like MRI—suggests a future where augmented imaging tools democratize access to advanced diagnostics globally.

In conclusion, Bennett and colleagues have presented an innovative, technically advanced object detection framework that significantly refines prostate localization in surface-based abdominal ultrasound images. Their work exemplifies the intersection of machine learning and medical imaging and offers a practical solution to longstanding clinical challenges. As adoption of such AI technologies increases, the landscape of urological diagnostics and treatment guidance stands on the brink of a revolutionary shift, promising better patient experiences and improved clinical outcomes.

Subject of Research: Prostate localization in surface-based abdominal ultrasound images using AI-driven object detection techniques.

Article Title: Object detection as an aid for locating the prostate in surface-based abdominal ultrasound images.

Article References:

Bennett, R.D., Barrett, T., Gnanapragasam, V.J. et al. Object detection as an aid for locating the prostate in surface-based abdominal ultrasound images. Commun Eng (2025). https://doi.org/10.1038/s44172-025-00550-y

Image Credits: AI Generated

Tags: addressing limitations in prostate ultrasound, artificial intelligence in ultrasound diagnostics, automated prostate identification methods, challenges in ultrasound image clarity, deep learning algorithms for prostate imaging, enhancing accuracy in ultrasound prostate evaluation, future of prostate imaging technology, improving ultrasound imaging efficiency, innovations in urology diagnostics, object detection in medical imaging, prostate cancer imaging advancements, prostate localization techniques in ultrasound